Calculation group

A calculation group applies one DAX pattern to every measure in your model. You write YTD, MTD and YoY% once instead of repeating them for e...

Read definitionA data warehouse is a central database that collects data from many source systems and structures it for reporting and analysis. It's optimised for fast reads and aggregation across large volumes of historical data.

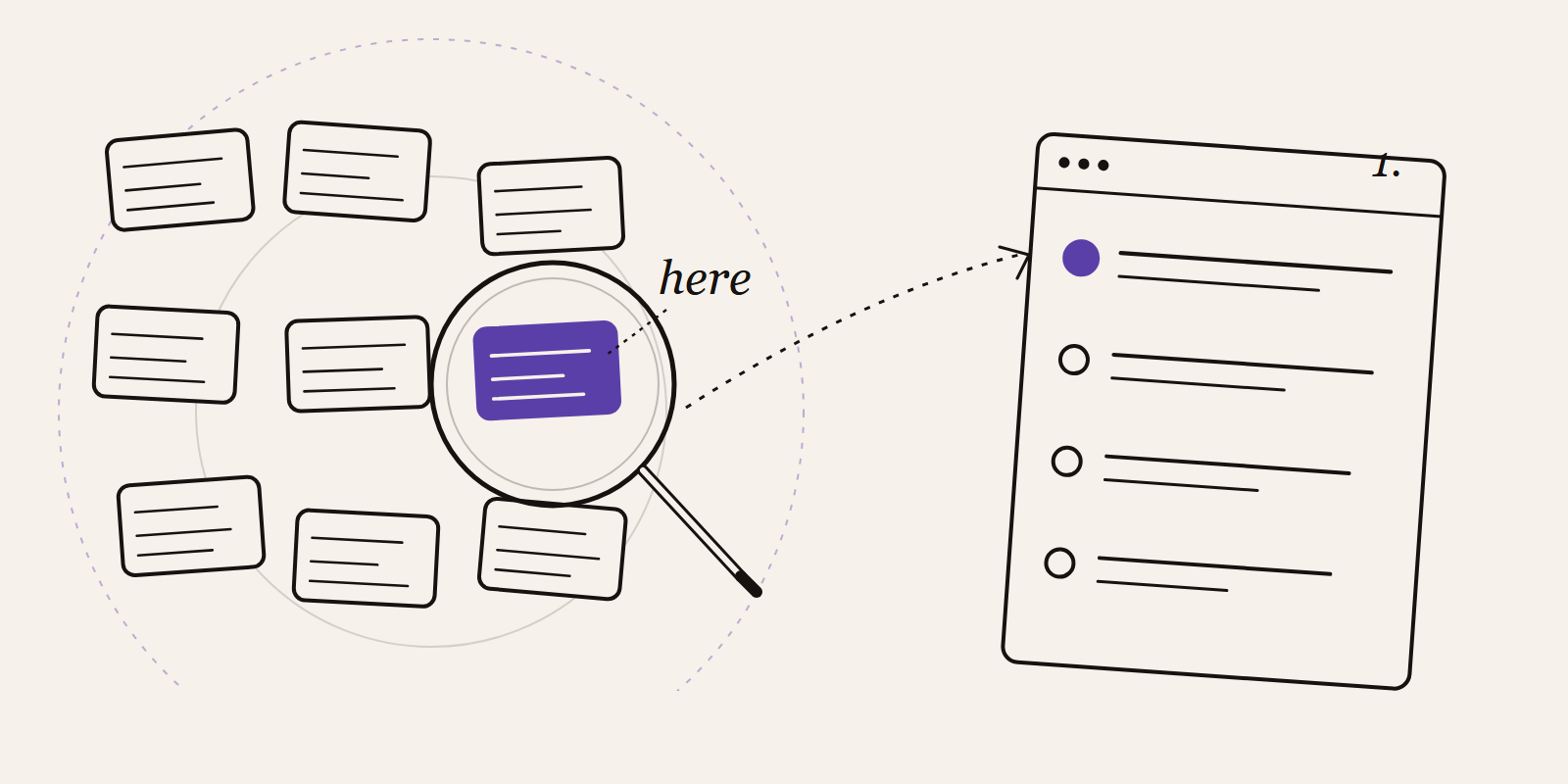

A data warehouse is a central database purpose-built for reporting and analysis. It collects data from different source systems (ERP, CRM, webshop, accounting) and structures it into a consistent model, keeping the full history. Analysts, dashboards, and AI applications pull their data from here without loading the underlying operational systems.

You can think of a data warehouse as the memory of an organisation. Operational systems live in the here and now: how much stock do you have today, which invoice do you send today. A warehouse answers questions about evolution: how has our revenue trended over three years, which customers churn after which interaction, how does this quarter compare with the previous one.

The classic definition comes from Bill Inmon: a subject-oriented, integrated, time-variant, non-volatile collection of data that supports decision-making. Those four properties still apply today, even though warehouses now look very different technically from those of the 1990s.

Management reporting

Monthly revenue reports, quarterly analyses, board dashboards. Classic, and still the biggest user.

Self-service analytics

Business users build their own reports in tools like Power BI without loading the operational systems or writing SQL against ten different databases themselves.

Historical analysis and trends

Operational systems overwrite old values (customer address, product price). A warehouse preserves the history so you can see how things evolved.

Data integration

One place where customer id from CRM and customer id from billing merge into a single customer view. Without a warehouse, every analyst reinvents that integration each time.

Foundation for AI and ML

Machine learning models need clean, historical, structured data. The warehouse is usually the starting point.

A classic warehouse has several layers, each with its own role.

Staging layer

Raw data from the source systems lands here with minimal processing. Its purpose is to limit load on the sources and make the rest of the pipeline restartable.

Integration layer or data vault

The data is cleaned, de-duplicated, and aligned around business entities (customer, product, order). This is where the truth shared by every report lives.

Presentation layer or data marts

This layer holds star schemas (fact tables surrounded by dimension tables) optimised for fast reporting. The Kimball methodology is the classic approach.

Two modelling philosophies dominate:

Kimball (dimensional modelling)

Pragmatic, business-friendly, star schemas per subject. Fast to build, good for reporting.

Inmon (normalised)

One large normalised enterprise model first, then data marts. More rigorous, but slower to deliver first value.

Technically, modern warehouses rely on columnar storage. Column-based storage keeps all values of a column next to each other. This is ideal for aggregations (sums, averages) over billions of rows and far more efficient than the row-based storage of classic transactional databases.

Both are databases, but their purpose and setup are fundamentally different.

An operational database (OLTP) supports daily transactions. Many small reads and writes, heavy normalisation to avoid redundancy, focus on current state. Think of the database under a webshop handling hundreds of orders a second.

A data warehouse (OLAP) supports analysis. Few but large reads, denormalisation to limit joins, focus on historical and aggregated values. Think of a report summarising three years of sales data by region and product category.

The two aren't interchangeable. Running reports directly against an operational database loads that system and slows the business down. Running transactions against a warehouse fails or is painfully slow. ETL or ELT pipelines therefore move operational data daily or continuously into the warehouse.

Over the last decade cloud warehouses have become the default. The main players:

Snowflake

Pure cloud warehouse, multi-cloud, decoupled storage and compute, easy data sharing between organisations.

Google BigQuery

Serverless, pay per query, deeply woven into the Google Cloud ecosystem.

Amazon Redshift

AWS-native warehouse, a natural fit if you're already on AWS, with Redshift Spectrum for querying S3 data.

Microsoft Fabric Warehouse

Part of Microsoft Fabric, built on OneLake, deeply integrated with Power BI and Azure.

Databricks SQL Warehouse

A SQL layer on top of a lakehouse, strong for teams that want to combine analytics and ML.

The choice between these platforms depends less on raw performance (they're all fast enough) and more on which ecosystem you already use, which tools your team knows, and how you want to organise data governance.

The rise of the lakehouse concept raised the question whether a classic warehouse is still needed.

A classic warehouse excels at structured data, predictable performance, strong governance, and straightforward SQL access. Less suited for unstructured data (documents, images) or heavy-duty machine learning workloads.

A lakehouse combines a data lake (flexible storage of all kinds of data in open formats) with warehouse-like features (ACID transactions, SQL, performance). A better fit for organisations that want one platform for BI and data science.

In modern architectures both often coexist. The lakehouse stores raw and semi-processed data for experiments and ML. The warehouse (or a structured layer inside the lakehouse) serves self-service reporting with strict definitions and strong security. Microsoft Fabric is a good example of a platform that brings both together in one environment.

A calculation group applies one DAX pattern to every measure in your model. You write YTD, MTD and YoY% once instead of repeating them for e...

Read definitionChange Data Capture (CDC) is the practice of detecting every change in a source system and forwarding it to downstream systems. It keeps you...

Read definitionData lineage shows the full journey data takes inside an organisation. From the original source to the final report, with meaning and contex...

Read definitionData mesh is an organisational model for data in which each business domain owns its datasets and offers them as products. It breaks with th...

Read definitionDAX is the formula language behind Power BI, Excel Power Pivot and Analysis Services. You use it to build calculations like totals, margins ...

Read definition

Ten practical steps to automate your business processes without AI hype. Start small, fix the process first, use the tools you already own, ...

Find the automation opportunities in your business that are actually worth building. A five-question test, the hotspots we keep seeing, and ...