Change Data Capture (CDC) is the practice of detecting every change in a source system and forwarding it to downstream systems. It keeps your lakehouse or data warehouse close to real time without copying everything again.

Change Data Capture, usually shortened to CDC, is the practice of detecting every change in a source system (insert, update, delete) and streaming it to other systems. Instead of reloading the full table every night, you send only what changed since the previous run. That scales better, puts less load on the source, and makes near-real-time synchronisation possible.

CDC shows up in nearly every modern data stack: replication between operational systems, feeding a lakehouse or data warehouse, syncing microservices, and real-time analytics on transactional data.

Think of CDC as a bookkeeper's daybook: instead of rewriting the whole ledger every evening, you note only the day's movements. Anyone who wants the current state reads the latest known version and applies the movements since.

Full-load ETL works fine as long as tables stay small. Past a few hundred million rows, three problems start to bite.

Source pressure

A nightly full load can tie up an OLTP database for ten minutes or longer. For 24/7 operations that is not an option.

Runtime

Full loads keep growing while the refresh window does not. The business asks for fresher data while IT adds more hours to the ingest.

Cost

Cloud platforms charge by the minute for compute. Incremental loads are usually cheaper than full loads because they process only the rows that changed.

CDC fixes all of this by shipping only the deltas.

Log-based CDC

The database writes every change to an internal transaction log (the WAL in PostgreSQL, the binlog in MySQL, the transaction log in SQL Server, which its CDC feature surfaces as change tables). CDC tools read that log and publish every change as an event. The big upside is minimal impact on the source and no application changes. Tools like Debezium, SQL Server CDC, and Fabric Mirroring all work this way.
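
To make "publish every change as an event" concrete, here is a minimal sketch of how a consumer might interpret one change event. The envelope fields (`op`, `before`, `after`, `ts_ms`) follow the general shape Debezium uses, but the sample payload, the `id` key column, and the `describe_change` helper are invented for illustration. Check your tool's documentation for the exact format.

```python
import json

# A hand-written sample event, loosely modelled on a Debezium-style envelope.
# The row contents are hypothetical.
RAW_EVENT = json.dumps({
    "op": "u",                                 # c = create, u = update, d = delete
    "before": {"id": 42, "status": "open"},    # row image before the change
    "after": {"id": 42, "status": "paid"},     # row image after the change
    "ts_ms": 1700000000000,                    # event timestamp, epoch milliseconds
})

OP_NAMES = {"c": "insert", "u": "update", "d": "delete"}


def describe_change(raw: str) -> dict:
    """Turn one raw change event into a small, uniform record."""
    event = json.loads(raw)
    # Deletes carry no "after" image, so fall back to the "before" image.
    row = event["after"] if event["after"] is not None else event["before"]
    changed = []
    if event["op"] == "u":
        changed = sorted(
            col for col in event["after"]
            if event["before"].get(col) != event["after"][col]
        )
    return {"kind": OP_NAMES[event["op"]], "key": row["id"], "changed_columns": changed}


change = describe_change(RAW_EVENT)
print(change)
```

Whether the `before` image is populated on updates depends on how the source database is configured, so a real consumer should not rely on it without checking.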

Trigger-based CDC

You place database triggers that write every insert, update, or delete to a changelog table. This works in any database that supports triggers, but it slows writes and needs maintenance on every table involved.
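
The trigger approach can be sketched with SQLite from Python's standard library. The `customer` table, the `customer_changelog` table, and the trigger names are hypothetical, and trigger syntax differs between database engines, so treat this as an illustration of the pattern rather than a template.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT);

-- Changelog table filled by the triggers below.
CREATE TABLE customer_changelog (
    change_id   INTEGER PRIMARY KEY AUTOINCREMENT,
    op          TEXT NOT NULL,
    customer_id INTEGER NOT NULL,
    name        TEXT
);

CREATE TRIGGER customer_ins AFTER INSERT ON customer BEGIN
    INSERT INTO customer_changelog (op, customer_id, name)
    VALUES ('insert', NEW.id, NEW.name);
END;

CREATE TRIGGER customer_upd AFTER UPDATE ON customer BEGIN
    INSERT INTO customer_changelog (op, customer_id, name)
    VALUES ('update', NEW.id, NEW.name);
END;

CREATE TRIGGER customer_del AFTER DELETE ON customer BEGIN
    INSERT INTO customer_changelog (op, customer_id, name)
    VALUES ('delete', OLD.id, NULL);
END;
""")

# Ordinary writes; the application code does not know about the changelog.
conn.execute("INSERT INTO customer (id, name) VALUES (1, 'Ada')")
conn.execute("UPDATE customer SET name = 'Ada L.' WHERE id = 1")
conn.execute("DELETE FROM customer WHERE id = 1")

changelog = conn.execute(
    "SELECT op, customer_id, name FROM customer_changelog ORDER BY change_id"
).fetchall()
print(changelog)
```

Every write to `customer` now costs a second write to the changelog, which is the slowdown the description refers to, and each additional table needs its own set of triggers.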

Timestamp-based CDC

Every table has a last_modified column. Your pipeline pulls rows changed since the previous run. Simple and cheap, but misses deletes and breaks if someone forgets to update the column.
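
A minimal sketch of the timestamp pattern, using an in-memory list in place of a real query. The row layout, the `pull_changes` helper, and the watermark handling are assumptions for illustration only.

```python
from datetime import datetime

# Hypothetical source rows. In practice this would be a query such as
#   SELECT * FROM orders WHERE last_modified > :watermark
SOURCE_ROWS = [
    {"id": 1, "amount": 10, "last_modified": datetime(2024, 1, 1, 8, 0)},
    {"id": 2, "amount": 25, "last_modified": datetime(2024, 1, 2, 9, 30)},
    {"id": 3, "amount": 40, "last_modified": datetime(2024, 1, 3, 7, 15)},
]


def pull_changes(rows, watermark):
    """Return the rows modified after the watermark, plus the new watermark."""
    changed = [r for r in rows if r["last_modified"] > watermark]
    # Advance the watermark to the newest change seen; keep it if nothing changed.
    new_watermark = max((r["last_modified"] for r in changed), default=watermark)
    return changed, new_watermark


previous_run = datetime(2024, 1, 1, 12, 0)
changed_rows, next_watermark = pull_changes(SOURCE_ROWS, previous_run)
print([r["id"] for r in changed_rows], next_watermark)
```

A row deleted from the source simply stops appearing in the query result, so nothing in this loop can report it. That is why the method misses deletes.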

Snapshot differencing

You compare a fresh snapshot with the previous one and derive the deltas. Always works, but slow and heavy. Sometimes the last resort when the source allows nothing else.
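
Snapshot differencing can be sketched as a comparison of two dictionaries keyed by primary key. The `diff_snapshots` helper and the sample rows are hypothetical. A real implementation would typically compare row hashes rather than hold both snapshots in memory.

```python
def diff_snapshots(previous, current):
    """Compare two snapshots keyed by primary key and derive the deltas."""
    inserts = {k: current[k] for k in current.keys() - previous.keys()}
    deletes = {k: previous[k] for k in previous.keys() - current.keys()}
    updates = {
        k: current[k]
        for k in current.keys() & previous.keys()
        if current[k] != previous[k]
    }
    return inserts, updates, deletes


previous = {1: {"name": "Ada"}, 2: {"name": "Grace"}, 3: {"name": "Linus"}}
current = {1: {"name": "Ada"}, 2: {"name": "Grace H."}, 4: {"name": "Margaret"}}

inserts, updates, deletes = diff_snapshots(previous, current)
print(inserts, updates, deletes)
```

Unlike the timestamp approach, this does detect deletes, but it has to read both snapshots in full on every run.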

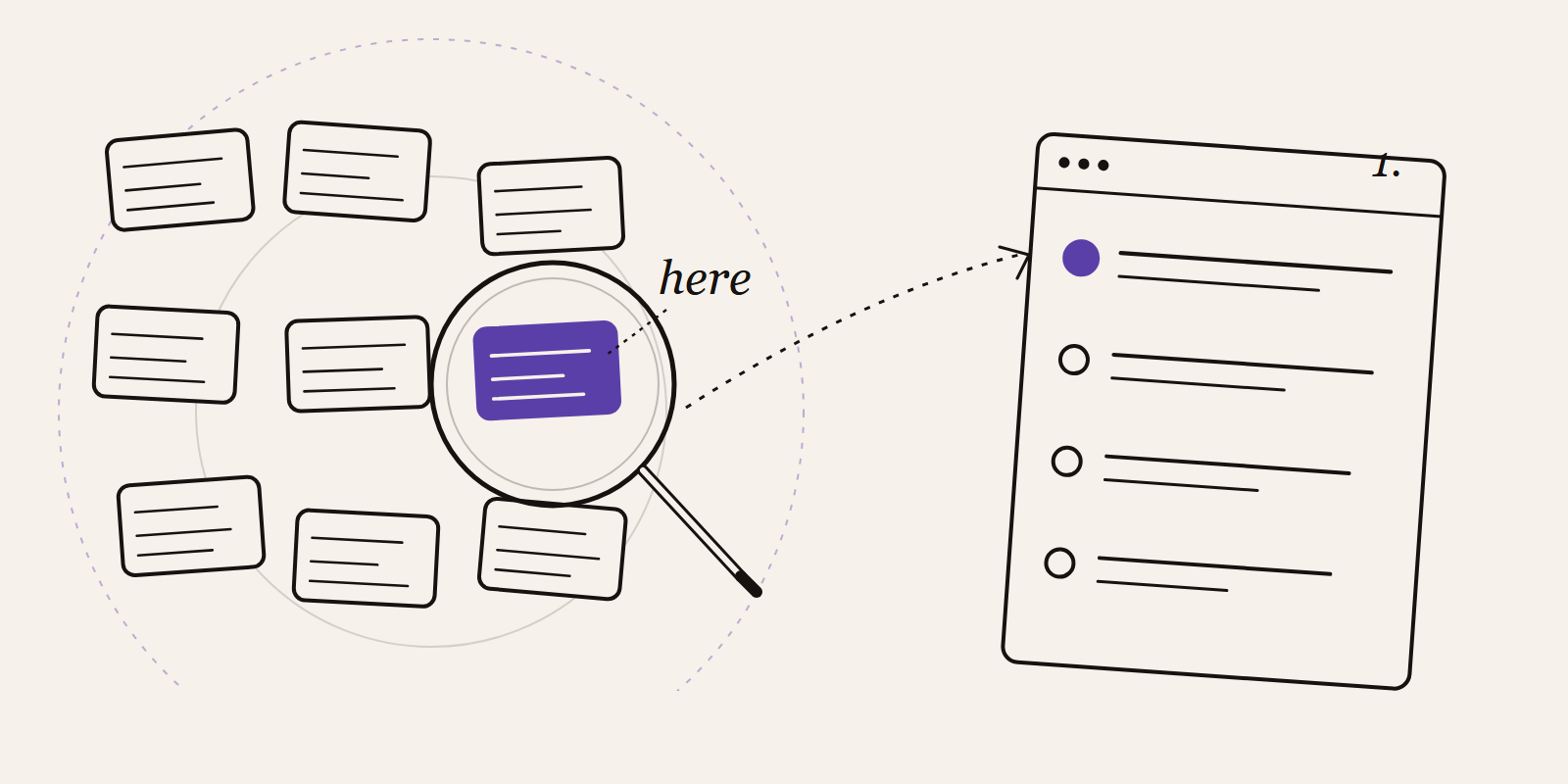

Capture

A CDC tool reads changes from the source. Mature connectors exist for SQL Server, Oracle, PostgreSQL, and MySQL.

Transport

Changes flow into a streaming layer like Apache Kafka, Azure Event Hubs, or Pulsar. That allows several consumers on the same stream.
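
The reason a streaming layer supports several consumers is that each consumer tracks its own position in a shared, append-only log. The toy `ChangeLog` class below illustrates that idea in memory. It is not the API of Kafka, Event Hubs, or Pulsar, and all names in it are made up.

```python
class ChangeLog:
    """Toy append-only log with an independent read offset per consumer."""

    def __init__(self):
        self._events = []
        self._offsets = {}

    def publish(self, event):
        self._events.append(event)

    def poll(self, consumer):
        """Return the events this consumer has not seen yet and advance its offset."""
        start = self._offsets.get(consumer, 0)
        batch = self._events[start:]
        self._offsets[consumer] = len(self._events)
        return batch


log = ChangeLog()
log.publish({"op": "insert", "key": 1})
log.publish({"op": "update", "key": 1})

lakehouse_batch = log.poll("lakehouse")      # sees both events
log.publish({"op": "delete", "key": 1})
search_batch = log.poll("search-index")      # sees all three, independently
lakehouse_next = log.poll("lakehouse")       # sees only the new delete
print(len(lakehouse_batch), len(search_batch), len(lakehouse_next))
```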

Sink

Downstream systems subscribe: a lakehouse writes the changes as Delta tables, a search index updates its records, a microservice rebuilds a cache.

Apply

In the target, inserts are added, updates overwrite, and deletes are tombstoned or truly removed. Delta's MERGE INTO statement is a workhorse here.
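
The apply rules can be sketched in plain Python with a dictionary standing in for the target table. This mirrors what a MERGE-style statement does conceptually, but it is not Delta's API. The event layout (`op`, `key`, `row`) and the `is_deleted` tombstone column are assumptions.

```python
def apply_changes(target, changes, soft_delete=True):
    """Apply an ordered batch of change events to a target keyed by primary key."""
    for change in changes:
        key, op = change["key"], change["op"]
        if op in ("insert", "update"):
            # Upsert: add the row if it is new, overwrite it if it exists.
            target[key] = {**change["row"], "is_deleted": False}
        elif op == "delete":
            if soft_delete and key in target:
                target[key]["is_deleted"] = True   # tombstone, row is kept
            else:
                target.pop(key, None)              # hard delete
    return target


target = {1: {"name": "Ada", "is_deleted": False}}
changes = [
    {"op": "update", "key": 1, "row": {"name": "Ada L."}},
    {"op": "insert", "key": 2, "row": {"name": "Grace"}},
    {"op": "delete", "key": 1},
]
result = apply_changes(target, changes)
print(result)
```

Tombstoning keeps the history available for audits and downstream consumers, while a hard delete keeps the target identical to the source. Which one fits depends on the use case.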

Microsoft Fabric ships a dedicated feature called Mirroring that delivers CDC out of the box for Azure SQL Database, Cosmos DB, and Snowflake. Source changes appear as Delta tables in OneLake within seconds, without you building a pipeline. For other sources you can use the classic Data Factory connectors with an incremental load pattern, or Debezium via Event Streams.

Feeding a warehouse or lakehouse with operational data without stressing the source database.

Real-time dashboards on transactional data, for example sales per minute.

Microservice synchronisation, where a service keeps its own cache current based on events from another service.

Fraud detection where seconds matter: a suspicious transaction demands an immediate response.

Migration to a new system without downtime, by doing a bulk load first and then letting CDC catch up the deltas until you can cut over.

Schema changes break pipelines

Adding a column in the source does not flow through a CDC stream automatically unless your tool handles schema evolution. Build data lineage checks into your plan so you know which downstream tables a schema change affects.
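
One lightweight safeguard is to compare the columns of each incoming row with the columns the target expects. The sketch below assumes flat rows represented as dictionaries. The `detect_schema_drift` helper and the column names are hypothetical.

```python
def detect_schema_drift(expected_columns, row):
    """Report columns that appeared in or vanished from an incoming row."""
    expected, incoming = set(expected_columns), set(row)
    return {
        "added": sorted(incoming - expected),
        "missing": sorted(expected - incoming),
    }


expected = ["id", "name", "email"]
incoming_row = {"id": 1, "name": "Ada", "email": "ada@example.com", "loyalty_tier": "gold"}

drift = detect_schema_drift(expected, incoming_row)
print(drift)
```

A pipeline could log a warning or stop when either list is non-empty, instead of silently dropping the new column.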

Missing deletes

Some CDC methods (especially timestamp-based) never capture deletes. Your target table keeps rows that have long since been removed from the source.

Exactly-once versus at-least-once

Many streaming systems guarantee at-least-once rather than exactly-once delivery, so the same message can arrive more than once. Make your apply logic idempotent, for example by deduplicating on a unique change key.
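
A minimal sketch of idempotent apply logic, assuming every event carries a unique `change_id` (a hypothetical field; a log sequence number could play the same role). A redelivered event is recognised and skipped.

```python
def apply_idempotent(target, applied_ids, change):
    """Apply a change unless its change_id was already processed."""
    if change["change_id"] in applied_ids:
        return False                       # duplicate delivery, skip it
    if change["op"] == "delete":
        target.pop(change["key"], None)
    else:
        target[change["key"]] = change["row"]
    applied_ids.add(change["change_id"])
    return True


balances = {}
seen = set()
deliveries = [
    {"change_id": "tx-1", "op": "insert", "key": "acct-1", "row": {"balance": 100}},
    {"change_id": "tx-2", "op": "update", "key": "acct-1", "row": {"balance": 80}},
    # The stream redelivers tx-1 after tx-2 has already been applied.
    {"change_id": "tx-1", "op": "insert", "key": "acct-1", "row": {"balance": 100}},
]
outcomes = [apply_idempotent(balances, seen, d) for d in deliveries]
print(outcomes, balances)
```

Without the check, the redelivered `tx-1` would reset the balance to 100. In a real sink the set of applied keys would need to be stored durably, ideally in the same transaction as the write itself.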

Watch replication lag

Without monitoring you only find out late that the stream is falling behind. Set an alert on the delay between source commit and sink apply.
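
The alert described above comes down to subtracting two timestamps and comparing the result with a threshold. The sketch below assumes each sample is a pair of source commit time and sink apply time. The 30-second threshold and the helper names are arbitrary choices for illustration.

```python
from datetime import datetime, timedelta


def replication_lag(committed_at, applied_at):
    """Delay between the commit in the source and the apply in the sink."""
    return applied_at - committed_at


def check_lag(samples, threshold):
    """Return the worst observed lag and whether it exceeds the threshold."""
    worst = max(replication_lag(committed, applied) for committed, applied in samples)
    return worst, worst > threshold


samples = [
    (datetime(2024, 1, 1, 12, 0, 0), datetime(2024, 1, 1, 12, 0, 2)),    # 2 s behind
    (datetime(2024, 1, 1, 12, 0, 5), datetime(2024, 1, 1, 12, 1, 35)),   # 90 s behind
]
worst_lag, should_alert = check_lag(samples, threshold=timedelta(seconds=30))
print(worst_lag, should_alert)
```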
